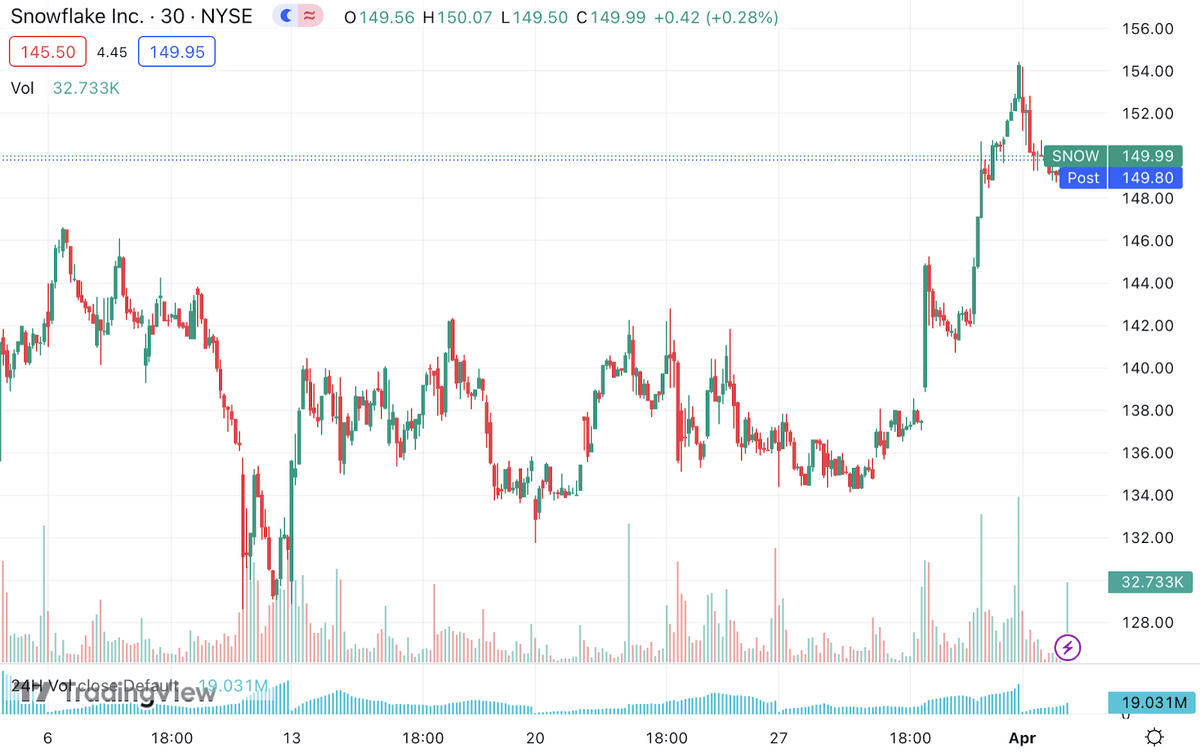

Databricks Stock Chart

I am able to execute a simple SQL statement using PySpark in Azure Databricks, but I want to execute a stored procedure instead. First, install the Databricks Python SDK and configure authentication as described in the Databricks documentation.

The requirement is to connect Azure Databricks to a C# application so that queries can be run, and their results retrieved, entirely from C#. The data lake is attached to Azure Databricks.
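One way to meet the C# requirement, which the text above does not prescribe, is the Databricks SQL Statement Execution REST API: the client POSTs a SQL string to a SQL warehouse and gets the result back as JSON. The sketch below only builds the request with Python's standard library, so it runs without a workspace. The host, token, and warehouse ID are hypothetical placeholders, and `build_statement_request` is a helper name introduced here for illustration. A C# `HttpClient` could send the same POST body and `Authorization: Bearer` header.

```python
import json
import urllib.request


def build_statement_request(host: str, token: str, warehouse_id: str,
                            sql: str, wait_timeout: str = "30s") -> urllib.request.Request:
    """Build (but do not send) a SQL Statement Execution API request.

    host, token and warehouse_id are placeholders the caller must supply.
    """
    body = {
        "warehouse_id": warehouse_id,  # ID of a SQL warehouse in the workspace
        "statement": sql,              # the SQL text to run
        "wait_timeout": wait_timeout,  # how long the call waits synchronously
    }
    return urllib.request.Request(
        url=f"https://{host}/api/2.0/sql/statements/",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # personal access token
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_statement_request(
        host="adb-0000000000000000.0.azuredatabricks.net",  # hypothetical
        token="EXAMPLE-TOKEN",                                # hypothetical
        warehouse_id="EXAMPLE-WAREHOUSE-ID",                  # hypothetical
        sql="SELECT 1 AS x",
    )
    print(req.get_method(), req.full_url)
    # Sending it would be: urllib.request.urlopen(req), then json.load the response.
```

Because the call is plain HTTPS plus JSON, nothing in it is specific to Python; the API reference describes the response shape and how to poll a statement that is still running.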
It's not possible: Databricks simply scans the entire output for occurrences of secret values and replaces them with `[REDACTED]`. This redaction is helpless if you transform the value, because only exact occurrences of the secret are matched.
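The redaction behavior described above can be modeled in a few lines. This is a toy illustration in plain Python, not Databricks' actual implementation: the hypothetical `redact` helper replaces exact occurrences only, which is why a transformed value, such as one with spaces inserted between its characters, passes through unmasked.

```python
from typing import Iterable

REDACTED = "[REDACTED]"


def redact(output: str, secrets: Iterable[str], mask: str = REDACTED) -> str:
    """Mask every exact occurrence of each secret value in `output`."""
    # Handle longer secrets first so a secret containing another is masked whole.
    for value in sorted(secrets, key=len, reverse=True):
        if value:  # an empty string would "match" between every character
            output = output.replace(value, mask)
    return output


if __name__ == "__main__":
    secret = "s3cr3t"
    print(redact(f"password={secret}", [secret]))            # password=[REDACTED]
    # Inserting spaces transforms the value, so the exact match no longer fires:
    print(redact(f"password={' '.join(secret)}", [secret]))  # password=s 3 c r 3 t
```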
I want to run a notebook in Databricks from another notebook using `%run`, and I also want to send the path of the notebook I'm running to the main notebook. Databricks is smart and all, but how do you identify the path of your current notebook? The guide on the website does not help.

Without using shutil, I can compress files in Databricks DBFS into a zip file that is written as a blob to Azure Blob Storage mounted to DBFS.
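A minimal sketch of the shutil-free approach, using only the standard `zipfile` and `os` modules. It assumes the cluster exposes DBFS through the local `/dbfs` path, so a mounted container appears under `/dbfs/mnt/...`; the function name `zip_directory` and any example paths are hypothetical.

```python
import os
import zipfile
from typing import List


def zip_directory(src_dir: str, zip_path: str) -> List[str]:
    """Compress every file under src_dir into zip_path without shutil.

    zip_path should live outside src_dir, or the archive would try to include itself.
    Returns the archive names that were written.
    """
    written = []
    with zipfile.ZipFile(zip_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for root, _dirs, files in os.walk(src_dir):
            for name in sorted(files):
                full = os.path.join(root, name)
                # Store paths relative to src_dir so the archive has no absolute prefix.
                arcname = os.path.relpath(full, src_dir)
                zf.write(full, arcname)
                written.append(arcname)
    return written
```

On a cluster, a call might look like `zip_directory("/dbfs/tmp/reports", "/dbfs/mnt/<mount-name>/reports.zip")`, with `<mount-name>` standing in for your own mount point. If writing the archive directly to the mount fails, building it on local disk (for example under `/tmp`) and then copying it to the mount is a common workaround.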
How do I create a temp table in Azure Databricks and insert lots of rows?

While Databricks manages the metadata for external tables, the actual data remains in the specified external location, which gives you flexibility and control over the data storage.

Can You Buy Databricks Stock? What You Need To Know!

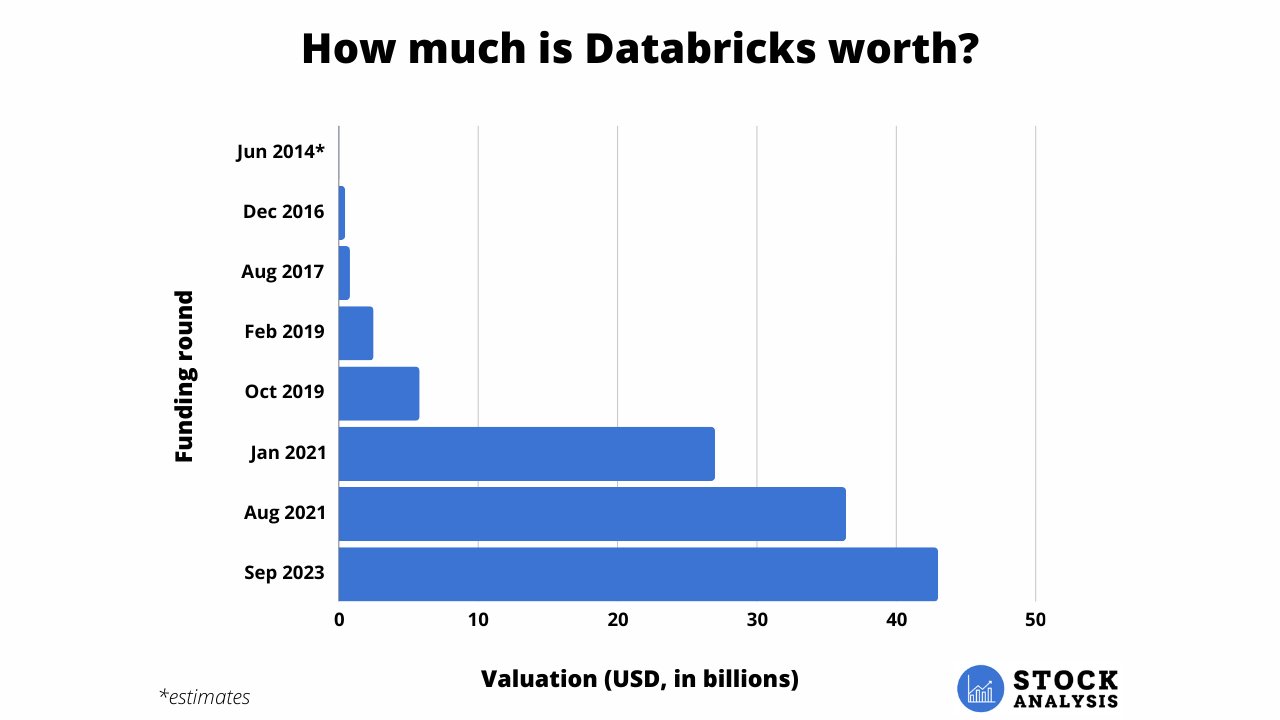

How to Buy Databricks Stock in 2025

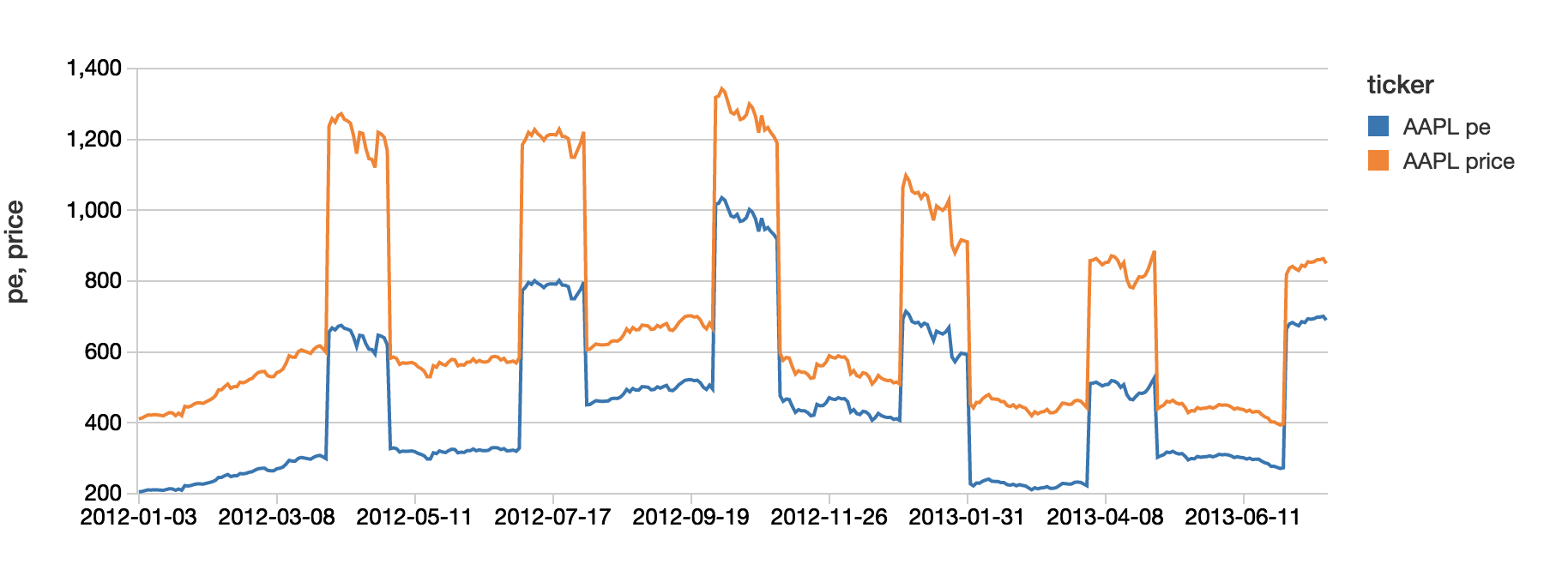

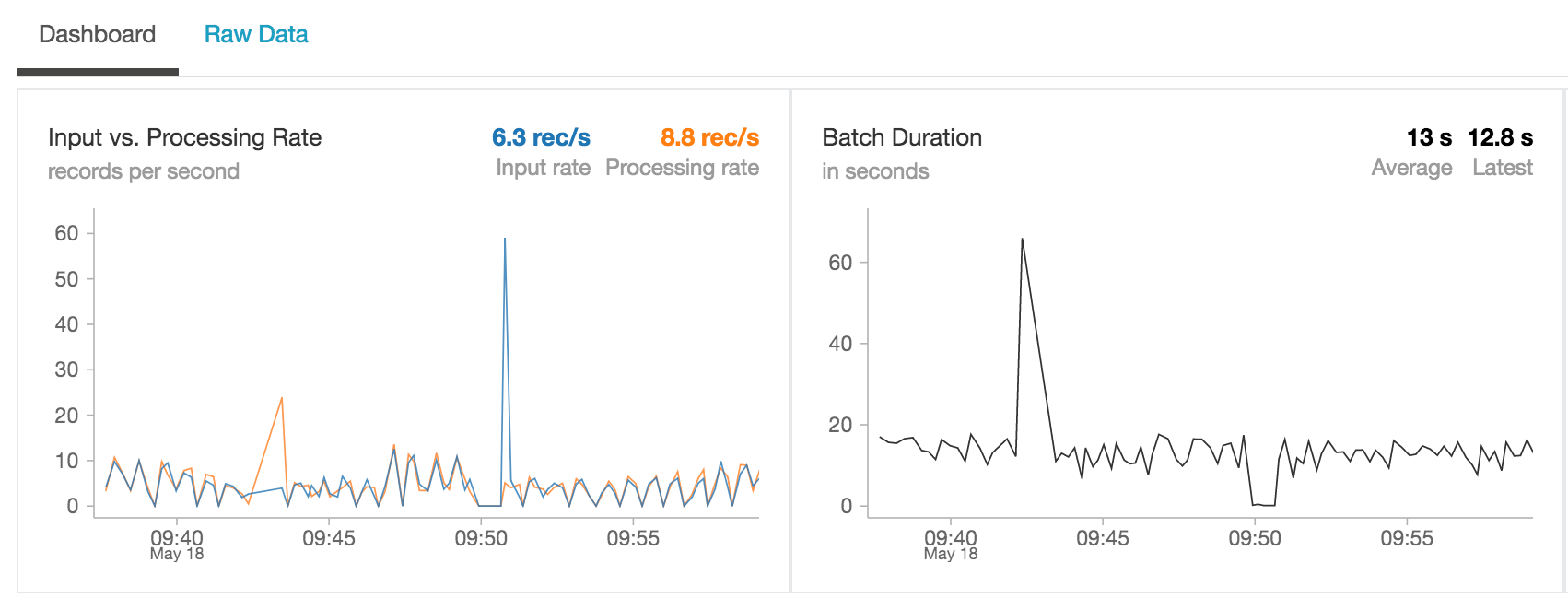

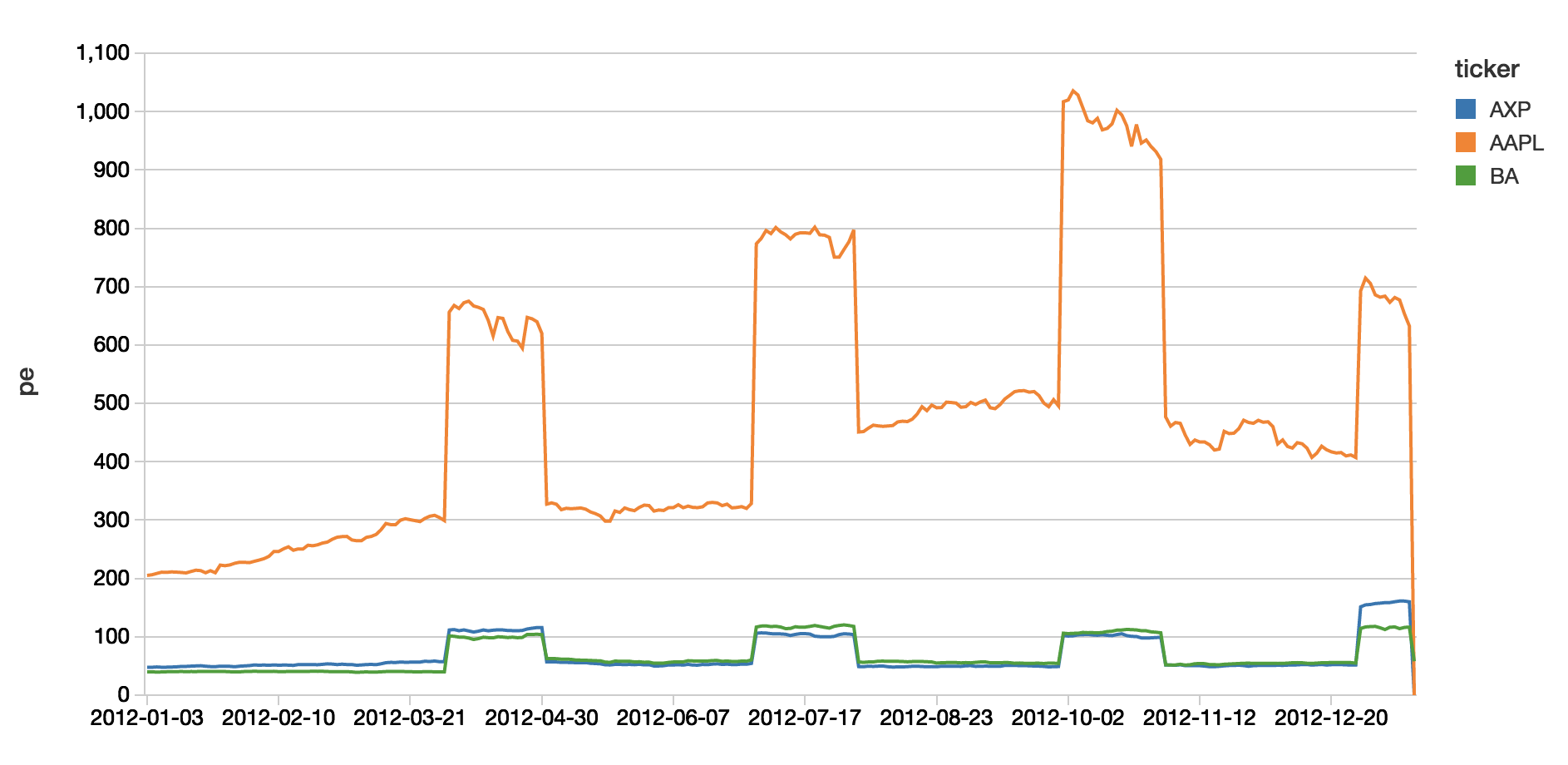

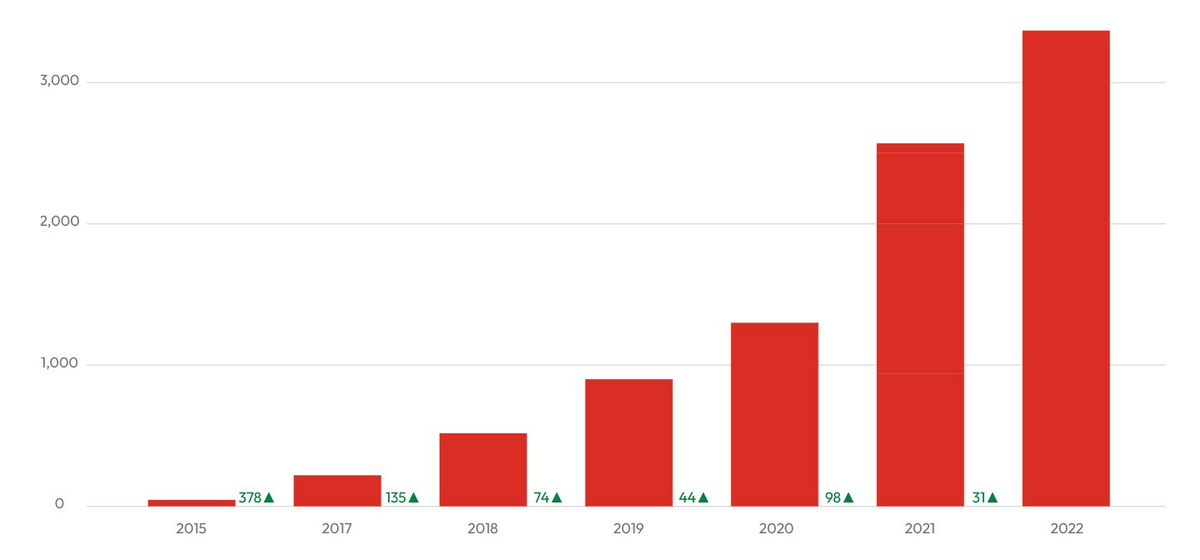

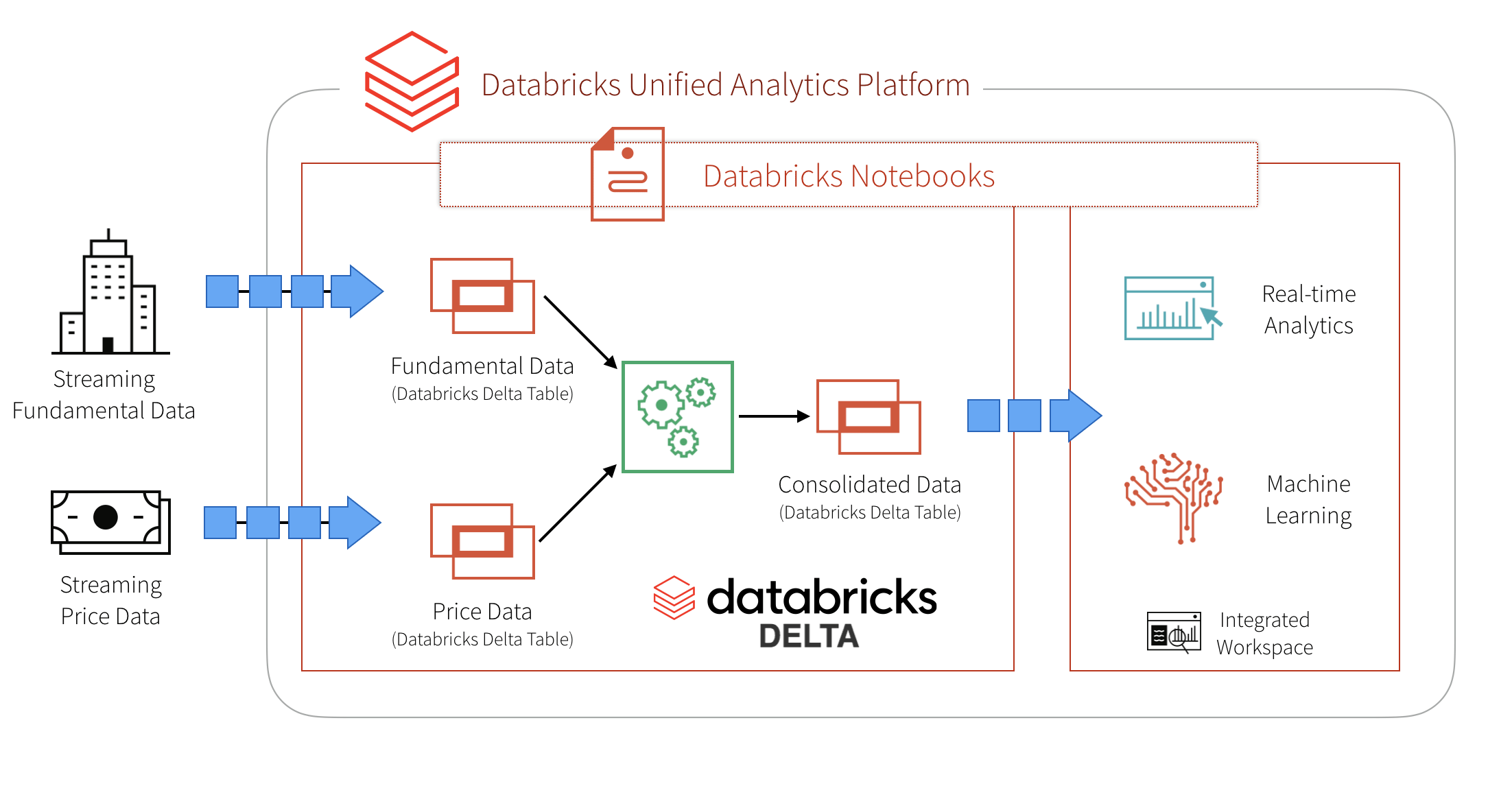

Simplify Streaming Stock Data Analysis Databricks Blog

Databricks Vantage Integrations

How to Invest in Databricks Stock in 2024 Stock Analysis

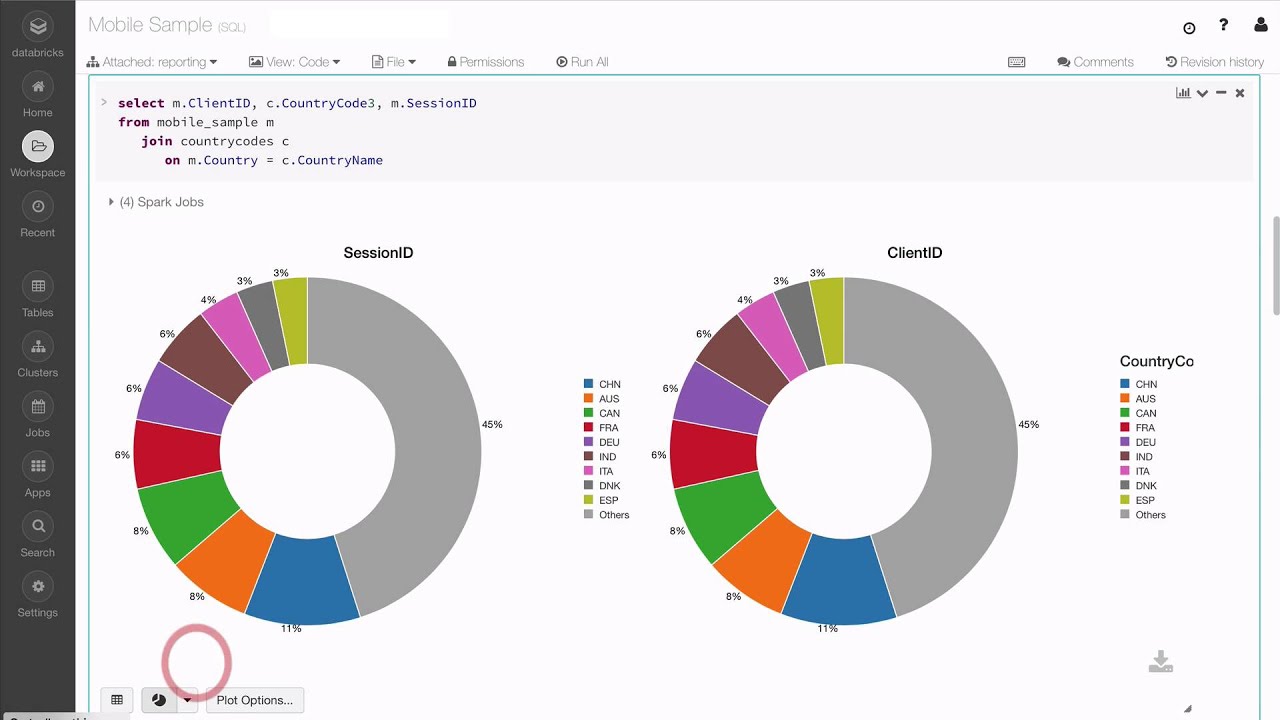

Visualizations in Databricks YouTube

Simplify Streaming Stock Data Analysis Using Databricks Delta Databricks Blog
